The second critical assumption is that the effect sizes are independent. Some (transformed) effect sizes, for example, the raw mean difference and the Fisher’s z transformed score, work well even for small sample sizes when the underlying populations are normally distributed. Depending on the types of effect sizes, reasonably large sample sizes in primary studies are expected in a meta-analysis. If the sample size is large enough, the sampling variance of the effect size can be assumed to be approximately normal and known. Another factor is the size of the sample. For example, a log transformation on the odds ratio and a Fisher’s z transformation on the correlation coefficient are usually applied before a meta-analysis is conducted. For a correlation coefficient and an odds ratio, we may apply transformations to “normalize” their sampling distributions. A raw mean difference, for example, approaches a normal distribution much faster than would a correlation coefficient or an odds ratio. The first factor is the type of the effect size. Several factors may affect the appropriateness of this assumption. First, the sample effect size y i is conditionally distributed as a normal distribution with a known sampling variance v i. There are two critical assumptions in random- and mixed-effects meta-analyses. In addition to testing the significance of the moderators, we may also calculate an R 2 index to quantify the percentage of the heterogeneity variance that can be explained by adding the moderators. When there is a categorical moderator with more than two categories, dummy coded moderators may be used. Multiple moderators may be included in the model. Where β 0 and β 1 are the intercept and regression coefficient, respectively. 
The model can be extended to include moderators, say x i, to explain the heterogeneity of the effect sizes: When there is excess variation in the population effect sizes, researchers may want to explain the heterogeneity in terms of the characteristics of the study. Given the same value of τ 2, I 2 may become larger when the “typical” within-study sampling variance becomes smaller. It should be noted that the value of I 2 is affected by the “typical” within-study sampling variance, which is affected by the sample sizes in the primary studies (Borenstein, Higgins, Hedges, & Rothstein, 2017). We may calculate a relative heterogeneity index I 2 to indicate what percentage of the total heterogeneity is comprised by between-study heterogeneity (Higgins & Thompson, 2002).

That is, we cannot compare the computed heterogeneity variances across different types of effect size. The heterogeneity variance τ 2 is an absolute index of heterogeneity that depends on the type of effect size.

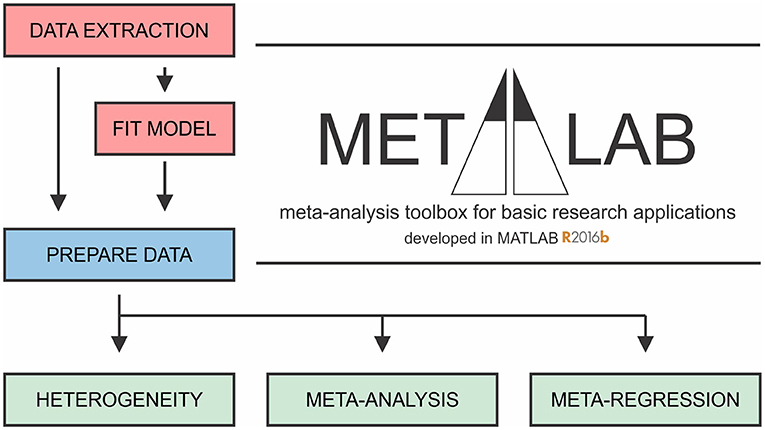

Where β R is the average population effect, Var( u i) = τ 2 is the population heterogeneity variance that has to be estimated, and Var( e i) = v i is the known sampling variance in the ith study. Applied researchers, however, may not be familiar with these advanced meta-analytic techniques.

COMPREHENSIVE META ANALYSIS METHOD SOCIAL SCIENCE HOW TO

Many advances in how to handle non-independent effect sizes have been made in the past decade. Results based on conventional meta-analytic methods are inappropriate or even misleading. This nested structure will create dependence when a meta-analysis is conducted. Another reason for non-independent effect sizes is that the effect sizes of the independent samples are nested within a primary study. For example, the same control group is used in calculating the treatment effects or there is more than one outcome effect size. The sampling errors of the effect sizes may be correlated because the same participants are involved in calculating the effect sizes. Reported effect sizes are likely to be non-independent for various reasons. It is rare for primary studies to report only one relevant effect size. Their introduction assumes that the effect sizes are independent, which is a crucial assumption in a meta-analysis. Cheung and Vijayakumar ( 2016) recently gave a brief introduction to how neuropsychologists can conduct a meta-analysis. Many books introducing how to conduct a systematic review and meta-analysis have already been published (e.g., Borenstein, Hedges, Higgins, & Rothstein, 2009 Card, 2012 Cheung, 2015a Cooper, Hedges, & Valentine, 2009 Hedges & Olkin, 1985).
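The quantities discussed in this section can be illustrated with a small numerical sketch. The text does not prescribe an estimator for the heterogeneity variance, so the code below assumes the DerSimonian-Laird moment estimator, together with the Higgins and Thompson (2002) definition of the “typical” within-study sampling variance. All function names and the example data are hypothetical and serve only as an illustration; dedicated meta-analysis software would normally be used in practice.

```python
import math


def fisher_z(r, n):
    """Fisher's z transform of a correlation r from a sample of size n.

    Returns the transformed effect size and its approximate sampling
    variance 1 / (n - 3).
    """
    return math.atanh(r), 1.0 / (n - 3)


def i_squared(tau2, v_typical):
    """Relative heterogeneity: share of total variation due to tau^2."""
    return tau2 / (tau2 + v_typical)


def random_effects(y, v):
    """Random-effects meta-analysis of independent effect sizes.

    y: effect sizes; v: their known sampling variances.
    Assumption: tau^2 is estimated with the DerSimonian-Laird estimator.
    """
    k = len(y)
    w = [1.0 / vi for vi in v]                      # inverse-variance weights
    sw = sum(w)
    sw2 = sum(wi ** 2 for wi in w)
    mean_fixed = sum(wi * yi for wi, yi in zip(w, y)) / sw
    q = sum(wi * (yi - mean_fixed) ** 2 for wi, yi in zip(w, y))
    c = sw - sw2 / sw
    tau2 = max(0.0, (q - (k - 1)) / c)              # truncated at zero
    # "Typical" within-study variance (Higgins & Thompson, 2002).
    v_typical = (k - 1) * sw / (sw ** 2 - sw2)
    w_re = [1.0 / (vi + tau2) for vi in v]          # random-effects weights
    beta_r = sum(wi * yi for wi, yi in zip(w_re, y)) / sum(w_re)
    return {
        "beta_r": beta_r,
        "se": math.sqrt(1.0 / sum(w_re)),
        "tau2": tau2,
        "v_typical": v_typical,
        "i2": i_squared(tau2, v_typical),
    }


if __name__ == "__main__":
    # Hypothetical effect sizes with equal sampling variances.
    result = random_effects([0.0, 0.2, 0.4], [0.01, 0.01, 0.01])
    print(result)
    # Same tau^2, smaller typical within-study variance: I^2 increases.
    print(i_squared(0.03, 0.01), i_squared(0.03, 0.005))
```

The last line of the demo mirrors the point made about $I^2$: with $\tau^2$ held fixed, halving the typical within-study sampling variance raises $I^2$, even though the absolute amount of heterogeneity has not changed. Note that this sketch assumes independent effect sizes, which is exactly the assumption questioned in this section.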